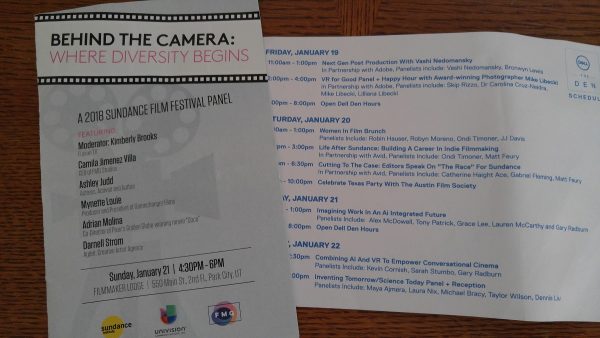

When most people think of the Sundance Film Festival they think of movies. Independent movies, to be exact. And that is a big part of this event, which is held every January in Park City, Utah. But when my film student high school kid and I attended a few weeks ago we hit as many forums and workshops as we did films.

We did our fair share of playing the complicated game of waitlisting tickets on the new app and waiting in lines to get into the movies we wanted to see (more on navigating the complicated matrix of Sundance in another post). And we saw some really great films (as well as some not-so-great entries). But it was a nice surprise to us that the gem of Sundance lies in the powerful, intimate forums and panels.

Throughout the festival schedule you can find forums on a variety of subjects, and you can show up to find the stage packed with folks from the top of the industry. They spend an hour to an hour and a half discussing interesting topics about film and about the future of the industry.

One panel we attended, titled “Imagining Work in an AI Integrated Future,” was an intense, deep discussion about what these film makers predict the future will be. Three of the panelists had been working on a yearlong study, imagining what our world, or more specifically the city of LA, would look like in 20 years, in the year 2037.

The main idea behind the project was that the future will be what we put our focus on, what we spend our time creating, now. The projects (film and otherwise) that we focus on, and the ideas we focus on, are destined to be our future. So, by imagining what we want that future to look like, we can create the technology, and use new technology, to get us to that place.

In his opening remarks, the moderator pointed out that he had recently had an evening free and decided to stream a television show. As he searched through the endless choices of movies and television on his streaming services, he found that a large majority of his choices were dark, negative shows. Think Black Mirror. He wanted something happy and light. We all laughed when he said he eventually landed on The Marvelous Mrs. Maisel.

But his point was well taken. A lot of the streaming video entertainment we choose from, what is being made for public consumption, is dark in nature. The discussion on the panel that day in Park City was about how we can choose to paint a different narrative. We can imagine our new technologies being put toward positive projects and making our lives better.

I was especially drawn to a lively discussion about using AI (artificial intelligence) in our everyday lives. We are already using AI-type devices, a prime example being the record number of Google Home and Alexa devices that were purchased in the past holiday season. We have easily accepted that we will place a small object in the center of our homes that holds seemingly unlimited information and assistance. It doesn’t matter that it’s not shaped like a robot; it’s artificial intelligence living in our homes.

Taking it a step further, the concept of professions using AI-type devices drew me in. It was suggested that in the near future many professional offices will contain some type of AI. Think of an attorney who can turn to a device in his office that condenses all the available legal information into one place. Instead of him digging through legal cases and precedents, the AI produces those searches in a matter of minutes. Or a doctor who doesn’t need to search for answers in her tough cases, and can turn to an AI device in her office that contains medical information for every specialty, not just the one she practices in.

Having this dynamic in an office situation would not just make a professional more accurate in their work, but would make it possible for the shift to swing from service labor to emotional labor. A doctor has more time to really get to know a patient, spending quality time really listening, because she no longer has to spend time doing research.

I have a son who has an undiagnosed, chronic health condition that is drastically hijacking his future. The idea that we could stop hopping from specialist to specialist is exciting to me. I’m ready to walk into a doctor’s office and have him punch in my son’s symptoms, and walk out with some answer that most doctors would never think of, or even be aware of. The AI device could search its massive database and change my son’s life.

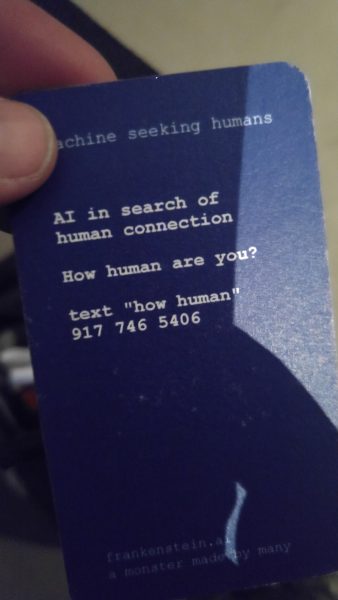

One more note on the concept of AI. My son and I happened to stumble into a unique experience where the goal was to get as many people as possible to input what they feel makes a human, in an attempt to create an actual AI that had human qualities. It was called Frankenstein AI: A Monster Made by Many, a nod to the famous Mary Shelley book, in which a young scientist creates an artificial human, with troubling consequences. We entered a quiet room with a handful of people, then sat face to face with a stranger and answered questions about experiences in our lives. This information was then saved and put together with input from many others, and by the end of the festival the team had an AI built from the human characteristics gathered through the week. Since we didn’t stay to the end of the festival we didn’t get to see the end product. But it was exciting to be a part of the process.

Altered Realities

Another futuristic push in the forum topics at Sundance was the advancement of altered reality. My son was able to try out an immersive reality setup, the newest version of virtual reality, and a really fascinating augmented reality. In augmented reality he wore a headset that was more like a visor, with a clear lens instead of the closed mask used in virtual reality. He could see everything around him, yet at the same time his eyes were seeing virtual objects. He quickly learned how to take things off a shelf (in this case, a brain, then an eyeball) and manipulate them. I could see what he was seeing on a computer screen in front of him.

In an earlier forum we had listened to experts in the field discuss how they are using these technologies to change how films are made. Unity Technologies has developed new software that has been used by Martin Allais and Nico Casavecchia in their creation of a project called Battlescar. They showed us, in samples on the screen, how the new software allowed them to adjust most of the aspects that used to be corrected in the long post-edit process. Instead of shooting scenes, then sending them to months of post-edit, the editing could be done on the spot.

In one sample we saw an army of robots marching across the screen. Across the bottom of the screen were the editing tools, and with a few clicks the director was able to change where the sun was in the sky, which automatically changed all the shadows in the scene. Instead of figuring out three months later that what they really wanted was an afternoon scene, and having to go back and reshoot specific scenes, corrections are made on the spot. All of this is happening as the film is being shot. There is no more attitude of “we’ll fix that later.”

This was important to these film makers because it allowed them to keep the progress of the story as the priority. They were thrilled that they could concentrate almost fully on keeping the heart and soul of their story moving forward, without worrying about technical issues like lighting.

One of the directors brought up a fascinating idea that draws a parallel between the stage we are in now and where we were a hundred years ago. Our first movies were silent. Then in the twenties, sound started to be introduced. But getting sound in a movie changed the way scenes were shot. The equipment needed to record sound limited the flexibility of a cameraman. So, in a sense, technology had to move backwards for a bit before it could move forward.

Eventually new equipment became easier to work with and the film industry continued on a forward path. This is similar to where the panelists felt we are right now, in introducing these new advancements to film making. The ideas are very exciting. The end products are inspiring. But to become seamlessly integrated, and to shed the bulky VR masks that are required in some of these steps, we are still at a baby stage.

I found myself thinking, many times over the course of our Sundance experiences, that my son, who is a 17-year-old future film maker, will look back on that experience and be amazed that we were fascinated by such simplistic ideas. By the time he’s fully entrenched in the industry, many of these kinks will already be worked out, and the pictures we took in Park City will make him laugh. He’ll be using much more sophisticated programs and equipment, and won’t even think about how truly amazing it is.

As I’ve been working on this post I have suddenly started to be bombarded with mentions of AI and VR in my everyday life. Many of the commercials during the Super Bowl, and now the Olympics, feature VR or AI of some kind. Many shows I’m watching are either using AI and VR or referring to them. Ready Player One, the upcoming Steven Spielberg movie based on the Ernest Cline novel, is about a civilization that uses VR to save their existence. I found out just this week that a friend’s husband has started his own company, called Machinify, that helps businesses integrate AI into their operations to become more efficient. AI and VR are not just our future, they are our present.

If you get a chance to head to Park City next January to take in some of the wonderful opportunities Sundance has to offer, don’t forget to look up the forums. You don’t have to be a film buff to get a lot out of the discussions. And if you’d like to see the panels and forums I attended, and many more that happened in those 10 days of the festival, head over to the YouTube Sundance channel. The beauty of the age we live in is that you can now access a lot of the greatest stuff that went down, from the comfort of your favorite lounge chair. Welcome to the future.
